This is an experience report sharing the findings and learnings of an agile coach trying to make small changes to a large organization, only to fail to change much of anything. While the journey and the struggle go on, growing and evolving, there is still much to learn.

1. Setting the scene

Four-ish years ago my organization started out on this “new” fad: an Agile Transformation. In doing so they opted for the (in)famous SAFe framework as a blueprint for the future IT organization. An integral part of this transformation was to reorganize the 1,000+ employees in IT into Agile Release Trains (teams of teams), of which we now have 18 in total.

Our Agile Transformation and reorganization is an IT thing, and unfortunately doesn’t involve the entire organization. However, the Agile Release Train that is the ground for this experience report has managed to mix Business and IT seamlessly into one unit – the “collective we” (as opposed to the “us and them”). They’ve been doing the agile dance for ~4 years and number approximately 150 hard-working, dedicated people from both Business and IT. One of their characteristics, as described by a member of the leadership team, is that they love structure, clear processes, Excel and numbers. “They love counting the beans, you know?” he said.

I, on the other hand, consider myself super unstructured. I’m not very fond of Excel per se, and while numbers are great, I like conversations and talking way more. Opposites of one another, some might say.

Disclaimer: Everything I share in this experience report is subjective and is based on my perspectives, interpretations and analysis (this is the CMA[1]-part if that wasn’t obvious)

Enough with setting the scene, let’s get on with the actual story!

2. Once upon a time (the problem – part 1)

… in an Agile Release Train (ART) not that far away really, the so-called “Bean Counters” were chugging away on their ever-growing backlog. Having done this for more than 3 years they had built a solid planning habit.

Once a quarter they would plan for the next quarter – in SAFe terms this would be the Program Increment (PI). However, prior to this magnificent planning session they would spend several days breaking down work items into very granular slices of work that could be assigned to individuals. This “breaking down”-ritual was followed by the classic “let’s estimate”-ritual, where they would estimate the upcoming work with what was perceived as high precision in the well-known format: the story point (where 1 story point would be equal to 1 day’s work).

Finally, when planning was due, based on their capacity for the coming PI, they would create the plan – detailed and filled near maximum capacity. Aiming for 100% utilization – Perfect!

While this worked flawlessly from a pure process perspective, the reality was that they didn’t manage to hit the target or deliver according to the plan. Estimates were, unfortunately yet expectedly, way off. The plan got changed almost immediately after PI planning, and at the end of a PI roughly 60% of what had been planned and committed got delivered.

Interestingly enough, the Bean Counters had been able to plan and execute in this particular way consistently for multiple consecutive PI’s. Very little variation could be measured or observed.

Then one day an agile coach ventured into this ART and said the powerful words: “Your plans are bad, your estimates are bad, and you should feel bad too!” And so they begged, “Please teach us” and everyone lived happily ever after.

Right, that’s not how it happened. Not even close.

3. Meeting the Bean Counters (the problem – part 2)

I met the Bean Counters in March 2021 as their previous agile coach moved on to another organization and they needed (?) a new one to help them progress on their agile journey. Looking into their respective backlogs and their previous plans, I got a good sense of what the culprit behind this poor “planned vs. delivered” thing was.

Through the first 5-6 months I tried to cautiously approach the ART from various angles, and when bringing my observations and their data into conversations, I was met with – in my opinion – an extremely fixed mindset and the classical nonsensical arguments along the lines of “this would never work here, because reasons” and “we don’t trust the data”. I felt ignored and my observations discarded even though I was supposed to be the expert in this agile domain thing.

This was super frustrating but puzzling to me, as they had asked for an agile coach to help them, but wouldn’t use me or my experience? Boggled, my mind was. Could this be because of trust, or rather lack thereof? Was my ethos not strong enough? Were my suggestions perceived as too radical or stupid?

4. Preparing the Beans

In order to make any form of dent in behavior, I had to get some tangible, objective stuff the Bean Counters would trust – or at least that was my hypothesis. As they’d previously questioned their own data (and thus my observations), we had one of those “grown-up talks”, manifested in a workshop about the importance of data, who’s responsible for keeping stuff like work items on the board updated and so on. We spent 3 wonderful hours diving into the theory of “Ask not what you can do for metrics, but what metrics can do for you!” as well as gazing at their own data. We explored patterns and anti-patterns and some strategies on how to deal with those. At the end of the workshop, the respective teams committed to work with one or more metrics (such as WIP, cycle time, velocity), and they discussed and outlined first steps/experiments to change their way of working during the remainder of the PI, to get used to working with their own data.

It was not without friction we concluded the workshop, as some felt themselves out of a job; “If I’m not supposed to update everything, then what am I supposed to do?” whereas others saw this task of updating a status of a work item as a tremendous task; “We cannot ask this of our people – they are already super duper busy doing important busy work”.

Having data would of course not alone do the trick. We needed something more, something amazing. This time I got lucky as this ART was already working with the Kata method[2], so ART Leadership and I drafted a target condition around flow, as we had a hypothesis that this would help teams focus on minimizing waste, thus delivering more and hopefully being on target according to their own plans. An important part of the target condition was to onboard teams on the concept of flow and how to establish this in their context (including them designing their own experiments on how to minimize one or more wastes in their process), as well as to give them tools and insights for planning. One of those tools was “Yesterday’s Weather”.

In short, Yesterday’s Weather goes a little something like this: “All things being more or less equal, if you – as a team – delivered 10 user stories last iteration, you’re most likely able to deliver the same in the coming one.” And the beauty here is: you don’t even have to estimate! It’s simple and effective. Essentially, it should counteract the planning fallacy by providing a reference point for forecasting.
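For the numbers-loving reader, the rule can be sketched in a few lines of Python. This is only a minimal illustration, not the tooling we used: the function name `yesterdays_weather` and the optional `window` parameter (averaging over the last few iterations instead of just the latest one) are my own assumptions for the example.

```python
import math
from statistics import mean


def yesterdays_weather(delivered_history, window=1):
    """Forecast how many items a team can take into the next iteration.

    delivered_history: number of items finished per past iteration, oldest first.
    window: how many of the most recent iterations to average over
            (hypothetical parameter; window=1 is the plain
            "same as last time" rule).
    """
    if not delivered_history:
        raise ValueError("need at least one completed iteration")
    if window < 1:
        raise ValueError("window must be at least 1")
    recent = delivered_history[-window:]
    # Round down so the forecast never exceeds what was actually delivered on average.
    return math.floor(mean(recent))


# Made-up history: 8, 12 and then 10 items delivered.
print(yesterdays_weather([8, 12, 10]))            # plain rule: same as last iteration
print(yesterdays_weather([8, 12, 10], window=3))  # smoothed over three iterations
```

Note that nothing in the sketch needs an estimate per item. It only counts what actually got finished.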

Of course this wasn’t without nay-sayers chiming in with the “doesn’t work because our backlog items are not of the same size.” While it is true that more uniformly sized backlog items make the argument easier to understand, the law of large numbers[3] should even out the variations in size when looking at an entire PI’s worth of scope. In addition, there was ample evidence of Yesterday’s Weather working flawlessly from the many previous PIs. In the end I reckon we convinced most teams to at least try Yesterday’s Weather as an experiment.
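The law-of-large-numbers argument can be illustrated with a small simulation. This is a rough sketch under loud assumptions: the item sizes are invented, and they are drawn independently from a fixed distribution, which a real backlog may well not do. The function name `relative_spread` is also just for this example.

```python
import random
from statistics import mean, pstdev


def relative_spread(items_per_batch, trials=2000, seed=42):
    """Relative variation (std dev / mean) of the total effort in a batch of items.

    Item sizes are hypothetical and drawn independently at random; a seeded
    generator keeps the run repeatable.
    """
    rng = random.Random(seed)
    sizes = [1, 2, 3, 5, 8, 13]  # assumed item sizes in days, not real data
    totals = [
        sum(rng.choice(sizes) for _ in range(items_per_batch))
        for _ in range(trials)
    ]
    return pstdev(totals) / mean(totals)


# A handful of items (roughly one iteration) vs. many items (roughly a whole PI).
small_batch = relative_spread(5)
large_batch = relative_spread(100)
print(f"5 items:   {small_batch:.2f}")
print(f"100 items: {large_batch:.2f}")
```

Under these assumptions the relative spread for the large batch comes out clearly smaller than for the small one, which is the whole point: the more items you count, the less the size of any single item matters.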

Finally, as we were closing in on PI Planning, together with the ART leadership, I had several sessions with both Product Owners and Scrum Masters on the weather forecast for Yesterday’s Weather, as well as the do’s and don’ts. We were aiming for awareness, desire, knowledge and ability (#ADKAR) prior to The Trial.

My confidence level was ultra-high. I mean, what could possibly go wrong, right?

5. The Trial – will it stand?

It was an early Thursday morning in March that marked the first of two days with the Bean Counters doing their second PI Planning of 2022. It all began with an amazing kick-off by the Business Owner taking us through the vision, the strategy and the roadmap. “You are amazing!” he said to the Bean Counters. This was followed by a walkthrough of focus areas in greater detail by the Product Manager. “Can you feel it? We’re doing it!! YES!!!” he offered enthusiastically. And finally, the Release Train Engineer took everyone through the practicalities including the rules of engagement related to our darling – Yesterday’s Weather. “Remember Yesterday’s Weather peoples! Please DO NOT commit to more than what you managed to deliver last PI as we’ve already agreed on, and for the love of all that’s holy, DO NOT put any features in the last iteration, as we want to save that for some awesome deliciousness. Now, if you have any questions, please check in with me or our friendly neighborhood agile coach or consult our pixie guide on the topic. May the planning be ever in your favor!”

3… 2… 1… BANG! and the teams ran off to do their planning in this new, simple and powerful way.

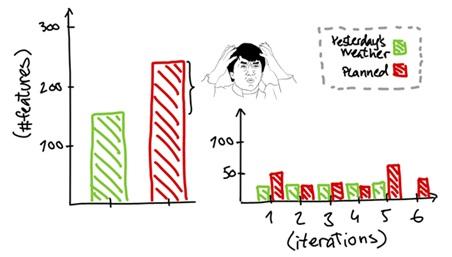

Hours went by like seconds and just like that we were at the end of day 1 of planning, reviewing the draft plan. Puzzled and a bit surprised, I blurted out “What the hell happened?” Not only were we 60% over-committed, but most of the scope (like 80%) was also planned for delivery in the very last iteration (6 of 6).

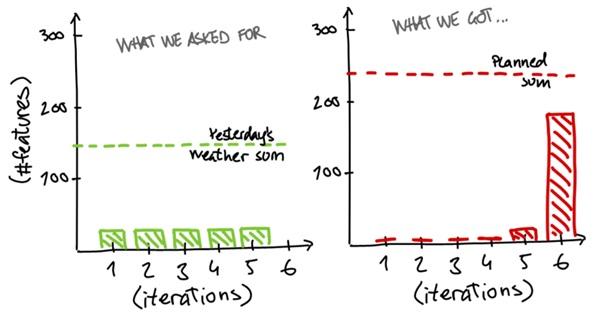

The Yesterday’s Weather we asked for and drilled with the teams, and what we got after first day of planning

Alright, one day down, one to go and there was still hope having one full day of planning left. So we re-iterated that “for the love of whatever deity you believe in – or don’t believe in – please DO NOT commit to more than we’ve talked about so many times, and please DO NOT put stuff into the last iteration. We’re begging here…”

3… 2… 1… BANG! On with the circus.

This time, hours did not go by like seconds, and I was more focused on monitoring the teams’ planning behavior, unlike the previous day where I suppose I was mentally absent. Unfortunately, it was to little avail: when going through the final plan review, we were still 50% over-committed (albeit down from 60%), with a bit over 20% of scope planned for the last iteration. On the bright side, that 6th iteration scope was now spread more evenly over the entire PI, yay…

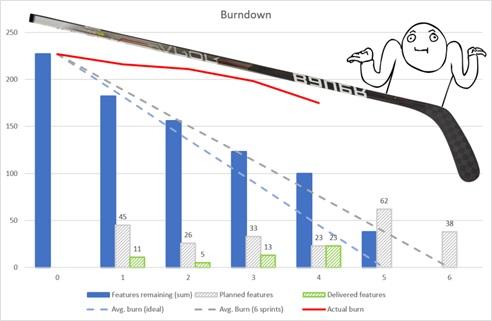

Total scope planned for the PI and the distribution of planned work across six iterations
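For the bean counters among us, here is a minimal sketch of how the two plan-review figures (over-commitment and share of scope in the last iteration) can be derived. The function names and all the numbers are made up for illustration; they are chosen to resemble the day-2 result and are not the ART’s actual data.

```python
def overcommitment(planned_total, forecast_total):
    """Fraction by which the plan exceeds the Yesterday's Weather forecast."""
    if forecast_total <= 0:
        raise ValueError("forecast must be positive")
    return (planned_total - forecast_total) / forecast_total


def last_iteration_share(planned_per_iteration):
    """Fraction of the total planned scope that sits in the final iteration."""
    total = sum(planned_per_iteration)
    if total <= 0:
        raise ValueError("plan must contain some scope")
    return planned_per_iteration[-1] / total


# Hypothetical plan: items planned per iteration across six iterations.
plan = [20, 25, 25, 25, 23, 32]
forecast = 100  # hypothetical: what was delivered last PI

print(f"over-commitment: {overcommitment(sum(plan), forecast):.0%}")
print(f"last iteration:  {last_iteration_share(plan):.0%}")
```

With these invented numbers the plan holds 150 items against a forecast of 100, so it is 50% over-committed, and 32 of the 150 items (a bit over 20%) sit in the last iteration.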

To add insult to injury we ended the PI Planning with a vote of confidence, and out of around 150 people we landed on a solid 4 out of 5, meaning we – as a collective – believed in the plan despite failing horribly at adhering to Yesterday’s Weather.

6. My take-away beans

During the vote of confidence I gave it a 2 indicating that I couldn’t support the plan as we were still way over-committed and had more than 20% scope in the last iteration. While my vote was noticed and acknowledged, it didn’t change anything which I suppose was as expected.

Could I be the only one thinking that over-committing was a bad thing? I mean, was I the only one focused so much on predictability? I had to find out.

I reached out to one of the Product Owners whose team had over-committed quite a bit trying to understand what happened. In addition, the Product Owner had during the PI-planning mentioned that this Yesterday’s Weather was awesome and worked perfectly – puzzled I was.

“The approach of Yesterday’s Weather was helpful for me, because it forced me to cut down on the number of features that I took in the PI planning, so we had a lower number of features to begin with and were able to focus on the limited number of features instead of preparing a lot of features, which we will not be working on anyway. At the same time it helped to make it visible to the stakeholders, that we cannot take an unlimited number of features in, even though they are important. It made it more obvious that we need to be realistic.”

~ Anonymous Product Owner, 2022

Wait? Was I actually getting somewhere after all? I mean, the response smelled like Yesterday’s Weather, albeit with less-than-perfect implementation, and perhaps even a shift in mindset.

I also had to check in with ART Leadership and Business Owner to understand their perspectives. While we could all agree that it was not cool to have this massive over-commitment and scope-in-the-last-iteration going on, they appear to be considerably more conservative and careful not to push people (too hard?) than I had originally interpreted. Although I am not super well versed in the politics of my organization, I can certainly understand their perspectives and sympathize with the delicacy of the existing culture and engrained behaviors. So, I fully respect their decisions even though I would love it if we could do just a wee bit better. Maybe next PI?

My reflections are as follows:

- I need to have more empathy for leadership and the people doing the work. I thought I was doing a great job at this, but I must set aside the ambitions that I have on behalf of the organization – or I at least have to try. And while I acknowledge that change takes time, I need to accept that people have different change readiness. In other words; better alignment of realistic expectations.

- The planning fallacy and optimism bias are difficult to combat. While Yesterday’s Weather and our attempt to get people to commit to specific deliverables during the PI are considered counteractions to the fallacy and bias, they need more time to work – possibly many, many iterations of falling back into old planning behaviors.

- Rather than experimenting with too many different changes (because I do other stuff in the ART too), I should strive to change one behavior at a time. And once that new behavior is ingrained, I can try and change the next and so on. Small(er) steps and perhaps a slightly less ambitious timeframe.

- If I want to help the Bean Counters even more, I need to get closer to the people in the teams. Related to this experience report, I’ve primarily done exercises around creating transparency and some training in how this Yesterday’s Weather works. However, I’ve mainly engaged with ART leadership and Scrum Masters and Product Owners, assuming they would bring the information to the teams. Broaden my reach, I must.

- On a similar note, I need to figure out how to nail the whole “what’s in it for me?” part so I don’t have to try and change people (which in itself is impossible if they don’t want to change), but inspire or create the desire for people to want to change themselves.

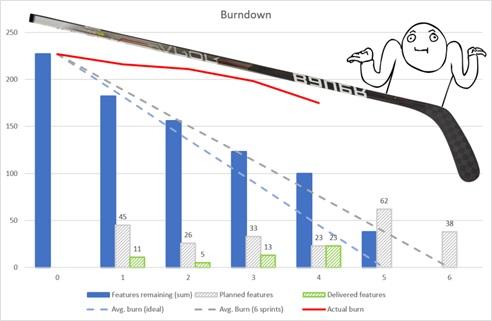

- For the many past PI’s, the feature delivery has looked like the classic hockey stick, where nothing gets delivered until the very end, where all of a sudden, a ton of stuff gets done. Anyhow, maybe we should consider if different slicing of features and work in general, and how we organize around work can have an impact on our predictability. There is something in our current structure that does not directly enable this, au contraire, but perhaps it’s time to start those conversations too.

- Repeat, repeat and then repeat some more.

… Oh, and old habits die hard!

(P.S. Just for the record, I never told them they should feel bad – I added that for the dramatic effect)

7. Post Trial

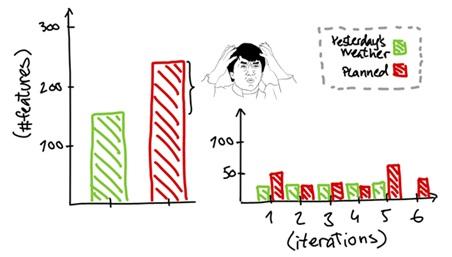

It’s now been a few months, and despite the high confidence vote it seems like they’re displaying the same old behavior, not delivering according to plan.

Will it hockey stick? Remains to be seen…

However, now we are having different conversations around the teams’ burndown and planning. We are asking Scrum Masters and Product Owners to consider what they can do to help the teams get back on track. We have managed to turn it around to be a conversation and reflection on how to improve, or an “improvement game over blame game” as the RTE so eloquently put it.

So far, I have invited the Scrum Masters to reflect on the following:

- “What can I do to help the team get back on track on the plan?”

- “What help can I ask for, to get me and my team closer to the target?”

- “What conversations should I perhaps have with the PO?”

- “How can I coach the team to get stuff done sooner rather than later?”

- “What lever could/should I pull to get my team to focus and finish stuff?”

As for the Product Owners, I have invited them to reflect on:

- “What can I do to help the team get back on track?”

- “How can I prioritize the backlog, so the team focuses on finishing work in progress, and not start new work?”

- “Where can I make adjustments to features / scope / priorities to help the team become more predictable?”

- “What questions can I ask my team to ensure focus and get stuff finished rather than working on multiple things in parallel, or to solve / mitigate whatever is perhaps holding the team back (blockers, impediments etc.)?”

Who knows, maybe next year there will be an experience report on super predictable bean counters 🤷‍♂️

8. About the author

I’m Benjamin, and I’ve been working within the agile space since 2014 across several organizations, following a career in the VoIP industry and software development. In recent years, my interests have been very much on the human side of IT, and currently I’m leaning heavily into the coaching stance of my professional career as an agile coach.

9. Acknowledgements

I would like to thank Frank “FrankO” Olsen for being an inspiration and north star during the brief period we got to work together. He has so much agile swag it’s uncanny. Plus, he basically told me “do this, or else!”

Daniel “Man of Steel” Ståhl for being my patient shepherd. Without him I doubt this experience would have been coherent (assuming it is actually coherent in its present form). @Daniel, I don’t know how you did it, but you managed to keep me grounded and not go gung ho all over the place. #veryrespect, #muchcredits, #wow!

The Bean Counters of course! Without them, this experience wouldn’t have been, duh! (Please don’t replace me – I really like working with you)

Finally, I would like to thank you who’s reading this experience report. It’s my first – hope you liked it. I had fun writing it.

RESOURCES

[1] Cover My Ass

[2] https://leansixsigmainstitute.org/kata/

[3] https://en.wikipedia.org/wiki/Law_of_large_numbers