The Defense Industry has been slower in adopting agile development than other industries. There are reasons for this. Some realities of government contracting run counter to core elements of the Agile Manifesto. Many find the formalities of defense contracting incompatible with the loose and dynamic nature of agile. Fortunately, the Defense Industry wants to be more agile, which keeps those of us on the inside laboring, learning, and innovating for progress along those lines.

In this paper, I draw from 10 years of experience applying agile methods at General Dynamics to identify some fundamental obstacles to practicing agile in the Defense Industry, and I describe how we’ve adapted to those obstacles through our evolution of a customized agile framework.

1. Introduction

Anyone claiming they’ve figured out how to do agile in the Defense Industry today should be questioned. I’ve been using, promoting, teaching, and refining agile at General Dynamics since 2005, and for all of our successes, it feels like we’ve made as many mistakes. Many of our programs, as I’m sure is the case at all defense contractors in 2015, are still working within the constructs of Waterfall-inspired models. The idea that agile is something you do in addition to all the traditional stuff is prevalent. And a lot of teams claim to be doing agile without really knowing what that means.

This state of affairs is not because the industry lacks innovative people or good leaders. I think the reasons are deeper than that. Unlike the commercial software industry, where applications are generally produced before consumers decide to sign up, download, or purchase, the Defense Industry is driven primarily by upfront government contracts to build and deliver systems. This dynamic, along with the formalities of government business, encourages behavior that runs counter to some key elements of the Agile Manifesto. It doesn’t make agile impossible, but it makes it hard.

This paper explains some of the fundamental obstacles to agile that I’ve observed within the Defense Industry, and describes some of the techniques we’ve used at General Dynamics to apply agile in that environment. The scope of our efforts thus far has been too modest to have changed any significant portion of the industry. But I hope we’ve progressed far enough in our work that someone struggling in those same trenches might read this and find the idea they need for their next breakthrough.

2. Obstacles to the Agile Manifesto

In 2001, a group of software experts published a set of four value statements called the Agile Manifesto. This brief document is the kernel of today’s agile movement. There are numerous Agile processes and frameworks, and countless individual instantiations of those processes and frameworks by different teams and organizations. The only universal way to gauge how conducive an environment is to what we call “agile” is to consider how conducive that environment is to the value statements of the Agile Manifesto.

For reference, the authors of the Agile Manifesto state that they value:

- Individuals and interactions over processes and tools.

- Working software over comprehensive documentation.

- Customer collaboration over contract negotiation.

- Responding to change over following a plan.

I’ve found that the realities of defense contracting encourage the inversion of certain statements of the Agile Manifesto.

2.1 Processes and tools over individuals and interactions

Defense Industry contractual practices have to support the development of large, complex systems that involve multiple contractors. Defense contracts are generally awarded before the contractors start their development. To clarify who is required to develop what, and to prevent potential overuse of taxpayer money, these contracts need to include formal and technically exacting statements of work for the contractors. It follows that, in performing the contracts, contractors need to show formal proof to evaluators that they did all of their specified work and, in cases of cost-plus contracts, did not spend money on additional, unwanted work.

One of the outcomes of this is the common Defense Industry practice of customers providing formal requirements specifications at the outset of contracts, and stipulating that contractors prove how their work products meet those requirements through the use of traceability. Given the technical complexity of the source requirements and the derived work products, and the need to permute traceability matrices to meet the needs of different evaluators, the only practical means for contractors to accomplish this is through the use of sophisticated requirements management processes and tools. Trying to do a lot of this with individuals and interactions would result in an expensive mess.

2.2 Comprehensive documentation over working software

While today’s defense systems are increasingly comprised of software functionality, the heritage of defense contracting is steeped in hardware development. Because of the cost and lead time required to build hardware, hardware engineers often do their work in relatively large batches. For example, they will design, construct, and test a circuit board in one large cycle of work, instead of designing, constructing, and testing features of the board in small iterations. Because of the money and time committed to these large batches of work, the hardware development process usually progresses through a series of gates, where comprehensive documentation is required at the early gates to help prevent costly mistakes at the later gates. While that upfront documentation has a cost, it’s worth it if it prevents a large batch of hardware engineering from being spoiled.

This gated, large batch approach to hardware development is the inspiration behind what is called the Waterfall software development model. The industry codified the Waterfall model several decades ago, when the complexity of software was orders of magnitude less than it is today and when most engineers drew their experience from hardware development. Today’s agile movement is a revolt against the Waterfall model. The commercial software industry, which initially operated under Waterfall influences, has evolved a lot in recent years. But the realities of government contracting make it harder for the Defense Industry to change process models.

Take for example the common stipulation that defense contractors provide comprehensive software requirements specifications and design documents at early phase gates of programs. While many in the Defense Industry today admit that this “big design upfront” approach is sub-optimal for modern software development, far fewer are willing to upset the applecart by insisting on a fundamentally different approach; that would require rethinking and revising some government processes and paperwork, and contractors don’t want to risk losing business for bucking the customer’s established rules. As such, defense contracts often encourage software engineers to promote comprehensive documentation over working software.

2.3 Following a plan over responding to change

Customers of commercial software applications generally commit to a vendor’s offering after the vendor has developed the product. When we download an app or sign up for a new service, we get a working product that we can start using right away. We don’t care if the vendor’s development effort finished behind or ahead of its original plan. We commit to a vendor’s offering because of its value compared to competing offerings in the marketplace. However the vendor got the product to market is their business.

For software developed in the Defense Industry, a customer often commits to a vendor prior to the start of the development effort, sometimes in a cost-plus arrangement where it agrees to pay the vendor for cost overruns. And even when that’s not the case (e.g., vendor-funded developments), defense contractors may have to make their project plans available to government evaluators tasked with ensuring the integrity and security of the end product. As such, the Defense Industry is permeated with the notion that a project needs to have a baseline plan established at the outset, and that project management needs to show progress toward that baseline plan throughout execution. This is serious business; customers may pull funding from projects that fall behind plan.

This results in overhead for project managers; it takes effort to create a detailed upfront plan, it takes effort to provide regular status in relation to that upfront plan, and it takes effort to re-baseline the original plan after everyone agrees it needs to change to remain relevant. But beyond creating extra work for managers, an insistence on tracking to an upfront plan incentivizes anti-agile behavior. When the leading question at project reviews is “how well is the team performing compared to the baseline plan”, the project manager is bound to focus on that over adapting to evolving customer needs. Thus, following a plan becomes more important than responding to change.

3. Highlights of our Agile Framework

When I first started applying agile methods at General Dynamics, most of what was written about agile was focused on the development of commercial software applications. I was drawn to the ideas, but felt they needed some reframing before they could be readily digested within the Defense Industry. So I created a process model that codified the agile practices that I was piloting on the project I was working on, and used that to derive generalized process artifacts for wider distribution. This early work was heavy on terminology and prescriptive rules, and was specialized for a certain type of product development. In the years that followed, with each new project, we further refined things, whittling down the content to only what was simple and most essential. We call the result of this work our agile framework.

This framework today is a small buffet of terms and concepts that provide abstract guidance to General Dynamics projects wanting to use agile. We also maintain a custom configuration of IBM’s Collaborative Lifecycle Management (CLM) tool suite for use in implementing the framework. Neither the framework nor the supporting tool suite is forced on anyone; we let interested projects decide which pieces they want to use, providing recommendations based on past experience and even doing some salesmanship, but never usurping the ownership of the project team’s work.

The following sub-sections describe some of the unique elements of our agile framework that have proven particularly helpful in practice.

3.1 Use case modeling driving product and project definition

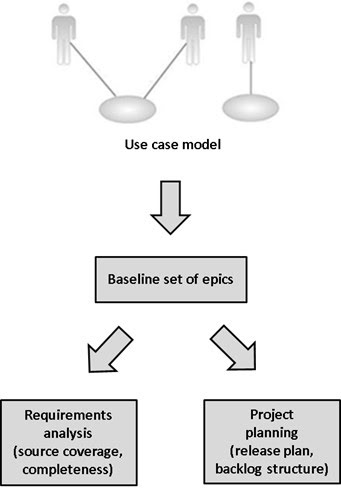

The term “use case” carries some baggage with it in different engineering circles. Defense Industry system engineers know use cases as key primitives in the discipline of system modeling. Some agile proponents might describe them as relics of “big design upfront” processes that lead to highly exacting diagrams that are more trouble than they’re worth. In our framework, we employ a lightweight use case modeling process, which includes the definition of external actors and their relations to the use cases themselves, to achieve an agreed-upon breadth-over-depth vision of the end product. We do this exercise at the outset of a project, before we start iterative development, and conclude it with a peer review of the use case model by a fairly broad set of stakeholders. The resultant use cases then become the product’s epics.

An “epic” is a standard term in the agile lexicon meaning a high-level capability that serves as an umbrella for a set of more granular capabilities called “stories”. Teams may define additional epics beyond their use cases, such as infrastructural or platform topics that don’t fit the use case paradigm but are still important capability groupings. Even in those situations, though, the use cases still serve as the most obvious and functionally relevant epics.

Figure 1. Use Cases as Epics

There are reasons we like this use case modeling approach. For one, “use case” is a more familiar term than “epic” to many in the Defense Industry. But more importantly, a well-coached use case modeling exercise returns a great deal of value, in terms of completeness and clarification of vision, from the relatively small investment of time it requires. It gets us to a set of epics that tends to be more robust than one derived in a less structured way.

Having a robust set of epics at the outset of a project has real advantages. From a product definition perspective, we use them as “buckets” to show customers very early on that we’ve accounted for all of their requirements. This is a lightweight and generally acceptable method for meeting the coverage criteria of the initial system requirements milestone review. Even on projects where we don’t have formal customer requirements or milestone reviews, such as internally funded projects, a set of epics derived early on from a use case model helps to get the stakeholders on the same page. From a project definition perspective, having a good set of epics at the outset aids greatly in planning, prioritizing, and organizing the project at the top levels. It’s true that the epics may change over time, but we’ve found use cases to be more stable than other high-level project constructs, such as targeted delivery dates, making them good objects upon which to arrange a project plan.
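The “bucket” check described above can be illustrated with a small sketch. It is only an illustration under stated assumptions: the requirement ids, the epic names, and the `coverage_by_epic` function are hypothetical and are not taken from the framework or its tooling.

```python
from collections import defaultdict


def coverage_by_epic(requirement_ids, assignments):
    """Group requirement ids by epic and list any that no epic accounts for.

    `assignments` maps a requirement id to an epic name. Both the mapping
    and the ids are hypothetical stand-ins for real project data.
    """
    buckets = defaultdict(list)
    uncovered = []
    for req_id in requirement_ids:
        epic = assignments.get(req_id)
        if epic is None:
            uncovered.append(req_id)  # nothing accounts for this requirement yet
        else:
            buckets[epic].append(req_id)
    return dict(buckets), uncovered


# Hypothetical example data: four customer requirements, two epics.
requirements = ["REQ-1", "REQ-2", "REQ-3", "REQ-4"]
assignments = {
    "REQ-1": "Plan Mission",
    "REQ-2": "Plan Mission",
    "REQ-3": "Monitor Status",
}

buckets, uncovered = coverage_by_epic(requirements, assignments)
print(buckets)
print(uncovered)
```

In this made-up data set, `REQ-4` has no epic, so it is reported as uncovered. A gap like that is the kind of thing the early review is meant to surface.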

3.2 Requirements analysis driving story definition

Like use cases, the concept of requirements management has some negative connotations within the agile community. The idea of a monolithic database of “shall statements”, provided upfront and to be implemented and verified in one large Waterfall cycle, is the antithesis of an agile process that embraces small batches of work, evolving product definition, and frequent changes in priority. The agile movement’s answer to traditional requirements management is centered on the concept of a story. A story is essentially a product requirement, albeit phrased in a fashion that evokes more of a stakeholder need than a legally binding directive. But the common approach to managing stories is quite different than that of traditional requirements. Agile teams tend to look for the least complicated method of capturing stakeholder needs, which can literally mean eschewing a requirements database in favor of sticky notes on a whiteboard.

As mentioned earlier in this paper, the need for full requirements traceability stipulated in many defense contracts effectively precludes the sticky note approach. As such, our agile framework at General Dynamics embraces the use of robust requirements management processes and tools. The result isn’t a heavy hindrance, though, but a streamlined process for both product validation and story generation.

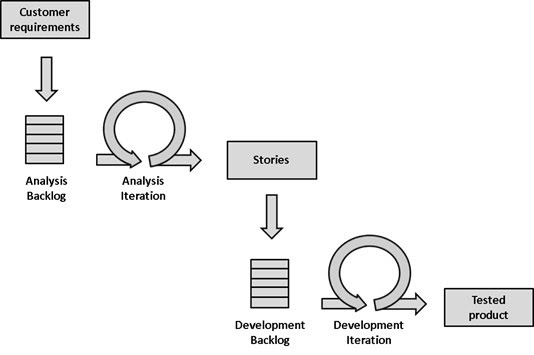

Our requirements process starts with a triage of the customer requirements. Considering factors such as feature priority, architectural significance, and risk, the team chooses a subset of the customer requirements for input into an iteration of requirements analysis. This analysis iteration, among other things, outputs stories that feed the agile development effort. At the completion of the analysis iteration, the analysis team returns to the triaged customer requirements and repeats the process. Meanwhile, the development team is able to start work on a subset of the stories produced by analysis. The analysis and development efforts therefore operate autonomously, while maintaining a relationship through the latter’s dependence on the former. This has proven an effective method of incorporating the systems engineering discipline into the agile process, enabling it to feed an agile software development effort in a natural and meaningful way. We’ve seen this manifest itself as separate analysis and development teams, and as separate roles on the same team; both ways work.

Figure 2. Analysis Feeding Development
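The triage-then-analyze loop can be sketched in a few lines. This is a minimal illustration, not the actual process: the 1-to-5 scales, the equal weighting of the three factors, the fixed per-iteration capacity, and all names are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerRequirement:
    req_id: str
    priority: int       # feature priority, 1 (low) to 5 (high); hypothetical scale
    architectural: int  # architectural significance, same hypothetical scale
    risk: int           # risk, same hypothetical scale


def triage(backlog, capacity):
    """Split the backlog into (selected, remaining) for one analysis iteration.

    Assumption: the three factors are simply summed, and ties are broken
    by requirement id so the ordering is deterministic.
    """
    ranked = sorted(
        backlog,
        key=lambda r: (-(r.priority + r.architectural + r.risk), r.req_id),
    )
    return ranked[:capacity], ranked[capacity:]


def analysis_iterations(backlog, capacity):
    """Yield one batch of requirements per analysis iteration until none remain."""
    remaining = list(backlog)
    while remaining:
        selected, remaining = triage(remaining, capacity)
        yield selected


# Hypothetical backlog of customer requirements.
backlog = [
    CustomerRequirement("REQ-1", priority=5, architectural=1, risk=2),
    CustomerRequirement("REQ-2", priority=3, architectural=5, risk=4),
    CustomerRequirement("REQ-3", priority=2, architectural=2, risk=1),
    CustomerRequirement("REQ-4", priority=4, architectural=4, risk=5),
    CustomerRequirement("REQ-5", priority=1, architectural=1, risk=1),
]

batches = [[r.req_id for r in batch] for batch in analysis_iterations(backlog, capacity=2)]
print(batches)
```

Each yielded batch corresponds to one analysis iteration. In practice the team re-triages with fresh judgment each time rather than relying on a fixed score, so the scoring function here should be read as a placeholder for that discussion.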

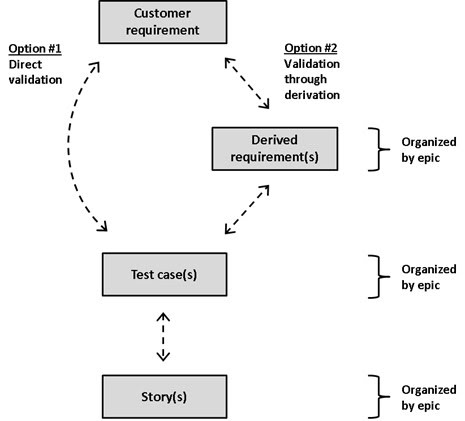

Central to the iterative analysis process is the validation of customer requirements with test cases. Our framework allows the analyst to derive one or more test cases that directly cover a given customer requirement. But if some amount of requirements decomposition is needed prior to the validation, the analyst may derive additional requirements to go between the customer requirement and the test cases. Regardless, the result is a set of well-defined test cases that trace back to the customer requirement. From there, the team defines one or more stories that capture the work needed to show that the product can pass each test case. As part of this process, any derived requirements, test cases, and stories are organized by the epics defined at the start of the project.

Figure 3. Analysis Feeding Development
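The trace from customer requirement to test case to story can be pictured as a small data model. The sketch below is an assumption-laden illustration: the class names, fields, and the `gaps` check are hypothetical and do not represent the CLM schema or the framework's actual artifacts.

```python
from dataclasses import dataclass, field


@dataclass
class Story:
    title: str
    points: int


@dataclass
class AcceptanceTest:
    name: str
    stories: list = field(default_factory=list)  # work needed to pass this test


@dataclass
class Requirement:
    req_id: str
    epic: str                                        # epic used to organize the artifacts
    derived: list = field(default_factory=list)      # optional decomposition
    test_cases: list = field(default_factory=list)   # tests that cover it directly


def covering_tests(req):
    """Collect the tests that cover `req`, directly or via derived requirements."""
    found = list(req.test_cases)
    for child in req.derived:
        found.extend(covering_tests(child))
    return found


def gaps(req):
    """Describe where the trace from a requirement down to stories is broken."""
    cases = covering_tests(req)
    if not cases:
        return [f"{req.req_id}: no test case"]
    return [
        f"{req.req_id}: test case '{tc.name}' has no story"
        for tc in cases
        if not tc.stories
    ]


# Hypothetical example data.
direct = Requirement(
    "CR-10", epic="Monitor Status",
    test_cases=[AcceptanceTest("Status is displayed", [Story("Show status panel", 5)])],
)
decomposed = Requirement(
    "CR-11", epic="Monitor Status",
    derived=[Requirement("DR-11.1", epic="Monitor Status",
                         test_cases=[AcceptanceTest("Alert is raised")])],
)
untested = Requirement("CR-12", epic="Plan Mission")

for req in (direct, decomposed, untested):
    print(req.req_id, gaps(req))
```

Here `CR-10` traces cleanly, `CR-11` reaches a test case through a derived requirement but has no story yet, and `CR-12` has not been analyzed at all.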

This entire process is realized seamlessly within our configuration of IBM’s CLM suite. CLM controls the object content, organization, and linkages, and allows any stakeholder with proper permissions to navigate the information. But there are benefits to the process beyond the tool’s nice paper trail. For one, it’s the best release planning process I’ve encountered; deriving test cases and stories from a set of customer requirements is an efficient and natural way of defining a robust and comprehensive set of stories for a given release. It also promotes the practice of Acceptance Test Driven Development (ATDD), which ensures that stories have a clear definition-of-done relating to the validation of something the customer wants. And finally, the organization of the derived requirements, test cases, and stories by epic helps to ensure that the detailed product definition is consistent with the overall product vision, and enables the performance-to-plan calculations described in the next section of this paper.

3.3 Tracking performance to plan

Managers generally like the flexibility and focus of agile. But some worry that that same flexibility and focus will lead the team off course without any warning that the original project goals have been put at risk. This is an understandable concern, especially when the project is being done under a government contract in which the scope and completion date were defined at the start. This uneasiness may result in managers shying away from agile entirely, or structuring the project with a traditional top-level plan and reporting scheme, and allowing the team to somehow use agile within that. This latter scenario gets messy, necessitating awkward methods for translating between agile and traditional project management concepts. Worse, it limits the full potential of agile, which is a great help to managers who know how to use it.

So how does a project exploit the flexibility and focus of agile while providing an indicator of the team’s performance toward the overall plan? Traditional statusing methods tend not to work well for this, as they usually require task start and end dates to be defined too far in advance to really allow agile to breathe. We use a technique that provides a big picture comparison to plan without forcing unnecessary commitments at the task level – and it falls out effortlessly from the agile framework constructs previously described.

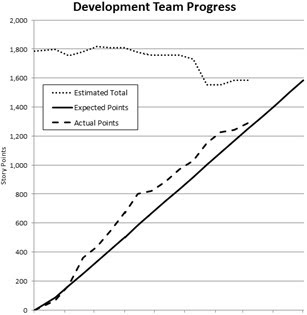

The technique employs something called a “burn-up” chart to show schedule performance for the project. Many in the agile community are familiar with burn-up charts and their usefulness in tracking the completion of product features. Our chart plots story points over time, and contains three different lines: one showing the estimated total number of points at project completion, one showing the number of points needed to be achieved to stay on schedule, and one showing the number of points actually achieved to date. (Another version of this chart shows a projected completion date instead of a fixed completion date, but is omitted here because government contracts are usually defined with fixed deadlines.)

Figure 4. Burn-Up Progress Chart

Recall from the earlier description of our requirements analysis process that we group our development stories by the epics defined at the start of the project. As such, we are able to count story points by epic. The initial estimated total number of story points is derived after a meaningful batch of stories has been defined across a subset of epics (e.g., after the first one or few analysis iterations). As we execute the project, we use the roll-up of completed story points by epic to count the total number of achieved points, and revise the estimate at complete for each epic as needed. We do all this counting and estimating by epic because the epics, which are defined upfront and outline the product vision, tend not to change much over the course of the project. Releases and other time-based project milestones, on the other hand, are bound to change in response to the changing priorities of project stakeholders. We have separate charts for tracking progress toward near-term milestones, but our overall project schedule performance chart is purposefully kept milestone-agnostic through the use of epics. A project can thereby track overall progress to plan while still being agile in its ability to adapt to changing priorities and milestone dates.
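The roll-up behind the three lines of the burn-up chart can be sketched as follows. This is a minimal illustration: the paper does not state how the "needed to stay on schedule" line is computed, so the sketch assumes a straight line from zero to the current estimated total at the fixed completion date. The epic names, point values, and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EpicStatus:
    estimate_at_complete: int  # current estimate of total story points for the epic
    completed: int             # story points completed so far (cumulative)


def burn_up_row(period, total_periods, epics):
    """Roll epic-level points up into one row of the burn-up chart.

    Assumption: the "needed" line is linear, reaching the estimated total
    at the final period. A real project plan may use a different profile.
    """
    total = sum(e.estimate_at_complete for e in epics.values())
    achieved = sum(e.completed for e in epics.values())
    needed = total * period / total_periods
    return {"period": period, "total": total, "needed": needed, "achieved": achieved}


# Hypothetical snapshots for the first 3 of 10 reporting periods.
# In period 3 the estimate for "Plan Mission" is revised from 40 to 50 points.
snapshots = [
    {"Plan Mission": EpicStatus(40, 5), "Monitor Status": EpicStatus(60, 5)},
    {"Plan Mission": EpicStatus(40, 12), "Monitor Status": EpicStatus(60, 10)},
    {"Plan Mission": EpicStatus(50, 18), "Monitor Status": EpicStatus(60, 12)},
]

rows = [burn_up_row(i, 10, snap) for i, snap in enumerate(snapshots, start=1)]
for row in rows:
    print(row)
```

With this made-up data the project is slightly ahead of the assumed plan line in period 2 (22 achieved against 20 needed) and slightly behind in period 3 (30 against 33), because the revised estimate raises the total. No release or milestone date appears anywhere in the calculation, which is the point of counting by epic.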

4. Our Agile Framework in Practice

The agile framework described above is the closest thing we have to a standard approach to agile at the General Dynamics subsidiary that employs me. The abstract concepts are codified in a handful of different documents and presentations that are available on a company agile portal. The terms and process diagrams are included in proposals and engineering management plans. And the accompanying CLM tool configuration is used, to varying degrees of fidelity, by a healthy subset of projects that claim to be following the framework. That said, most of the more than 10,000 people in the organization are unaware that the framework exists, or that anyone has tried to chart a common course for agile for the company. Agile is still a new thing for a lot of people.

I’m not at liberty to share specific details or company metrics regarding the effects of the framework on our business, but I can speak in generalities. Our agile framework, and the experience we have built up using it, is certainly a valued asset within the company. It continues to spread throughout the organization, and is referenced as a competitive selling point in proposals. It has helped a number of projects be more flexible and focused while still meeting the standards of accountability and rigor expected on government contracts. It gives the organization a common, cross-project vocabulary for talking about agile to customers and management in a way that fits our business context. And the fact that it is a codified process with Chief Engineer approval gives agile an air of validity alongside our formally documented legacy processes.

For all of its successes, though, our agile framework has encountered a good many challenges. The framework tends to stir up the waters for those teams that fully embrace it. This is particularly true for teams that use the accompanying CLM tool configuration: proper use of the tool ensures the practices of the framework are followed correctly, but there is a learning curve, both in terms of tool usage and in how it forces people to change the way they work. We’ve made big strides in how we administer the features of the tool to new teams, and are continuing to find ways to make things easier to learn and use. But the tool is still imperfect, and probably always will be. A powerful tool can be a great asset, but it takes careful shepherding and continuous improvement to ensure that it doesn’t become a liability.

While the difficulties in transforming the internals of an organization are significant, the bigger barriers to agile for defense contractors may be the ways in which our customers require us to do business. I’ve recently gained a deeper appreciation of how hard it is to do agile in government work. After spending nearly fifteen years working on government contracts, I’m now managing internal IT projects, using agile to help transform the way technology products and services are delivered within my company. I can now work directly with customers to define their requirements on a release-by-release basis, instead of receiving a formal specification at the start of a project. I no longer have to figure out how to deliver thorough documentation for everything we produce, and can instead focus on recording only what is truly valuable. And I can demonstrate our work to users and adapt based on their feedback, without needing contractual approval to make important changes. These relaxed parameters haven’t led us to cut any corners within our agile framework; our agile IT projects aren’t sloppy, they’re streamlined.

I think it’s important to acknowledge that the realities of defense contracting constrain agility. There are things about the industry that are probably not worth changing for the sake of agile, whereas other elements are ripe for reform. I think the optimal solution for the industry is likely an intelligent hybrid of commercial agile and traditional defense practices. In order to find this optimal hybrid (which is more than just new names for old things) we in the industry must continue to experiment with agile, to the extent that we risk making mistakes. To do this right, contractors and customers will have to make coordinated changes, which will be tricky. But we’ve proven, however modestly, that agile methods can make a real difference – even in the Defense Industry.

5. Acknowledgements

The evolution of our agile framework has been, and continues to be, the work of an organization, not of a single person. Chris Campbell and Pam Carmony, two General Dynamics Chief Engineers, were particularly helpful in codifying an agile approach that is both acceptable and useful to our business. George Hamilton and Dave Foster, two General Dynamics Engineering Managers, generously authorized the use of company overhead in support of our work. But I am perhaps most indebted to those teams that willingly tried the new approach, buying in to my sometimes mistaken ideas, and making the processes their own. Without the experience of real people practicing these methods, there would be no substance behind this paper.