When used purposefully and properly, assessments inform continuous improvement. Assessing an organization through their transformation, however, is not a one-time job, nor is it “one size fits all.” This report is about an assessment I conducted as an external coach leading a small team, working with leadership at Company X. It sheds some light on whether existing assessment tools can be improved and attempts to address the ambitious question of whether only external teams can conduct accurate agile assessments.

1. Introduction

We live in a VUCA (volatility, uncertainty, complexity and ambiguity) world. Understanding how an organization is currently doing with their transformation journey is more valuable than trying to guess what will happen 4 years from the time their agile journey started. When used purposefully and properly, assessments inform continuous improvement. Assessing an organization through their transformation, however, is not a one-time job, nor is it “one size fits all.” It is an ongoing need that is required to measure progress. Yet too often we choose to use assessment tools simply because they are easy to use, because they are accessible, or because they reinforce our illusions. Data collected with the tools to inform us on our agile maturity are useful for informing improvement efforts. However, unless paired with appropriate impact measures, the collected data points can lead to distorted results. Often, they do not focus on the difference that some practice changes have made for individuals, teams, or the organization as a whole, from a learning culture point of view. As an example, when you set up a training program as part of your transformation, how do you know you have created a learning culture? This takes more than answering the question: “I have attended the training X.” My point is, there are several things that can be missed when using assessment tools.

Without putting the assessment tools on trial, my story will tell whether tools are only as good as the people using them. I will also look at what was missing from a governance support point of view, and how data was being interpreted. Sometimes data collected from the tool was used for a different purpose.

This report describes my experience through a consulting engagement with Company X, to perform an assessment. I will highlight what worked and what did not throughout their agile transformation journey. Apparently many leaders in this company were frustrated by their inability to communicate the progress being made and how their programs and teams were contributing (or not) to their business performance as sold to them by some agile evangelists. Before engaging two colleagues and me, they told us that they had used an Agile Health Radar (AHR) assessment tool [AHR] for the last 3 years. Even though there are many reasons why companies need an assessment, experience with this organization has led me to the strong conviction that the AHR tool was not sufficient to provide a good overall picture of their agility.

Moreover, there is no “one size fits all” assessment tool. I will explain how assessments at different levels of Company X’s organization required different ways of assessing in order not to lose sight of the systemic view. Some tool vendors offer several versions of their tool that apply to teams, programs, or simply specific solutions (for example, a tool for DevOps). Again, this tells me that things are often taken in isolation when using several versions of the same tool for the same company. For example, if I am assessing the maturity of my teams in terms of their DevOps solution, why is the tool only geared towards that solution and not looking at the larger “whole”?

Having established a solid record of accomplishment and thought leadership as an agile coach, servant and skilled leader, and methodologist, I have led successful large-scale agile transformation projects, supporting large organizations worldwide with adopting agile and lean practices. When it comes to understanding how organizations are progressing on their agile journey, things cannot be considered in isolation. In my years of consulting engagements, I have provided well-thought-out, effective advice to organizations seeking improvements. My passion and my ability to listen and observe have helped me engage with clients and earn their trust when it comes to performing assessments.

2. Background

Company X is a recognized leader in business outsourcing, with a commitment to success and a record of achievement. With more than 14,000 employees serving more than half a million small- to medium-sized businesses across the US, their reputation is at stake if they do not meet their customers’ needs. They commenced their agile journey in 2014 by taking baby steps and carried out several agile initiatives over the next 5 years. Some were successful, others not so successful.

Their agile growth was highlighted by the creation of an agile Project Management Office (PMO), by some investments in terms of training, and recruiting scrum masters, with only sporadic support from leadership. As the number of “agile” teams grew exponentially, however, their leadership lost track of how well the teams were doing and how mature they were. If leaders do not know where they are as an agile organization and how well the teams are doing, this means they may have missed the mark and lost sight of the vision and their initial transformation road map.

In 2018, the leadership of Company X enlisted me and two other coaches to support them in their new initiatives, which included improving measurements, creating transparent governance mechanisms, and focusing continually on process improvement. I acted as the lead coach, guided our coaching team through the steps of our assessment process, and provided oversight.

Initially we were tasked by the company’s leaders with offering them more insight as to how teams, IT governance, and IT as a whole were progressing in achieving their transformation vision. To summarize in a few words, they had not seen any tangible improvements. In my opinion, the entire company suffers when the executive team does not see value in their agile transformation.

After observing 15 hand-selected teams, we found that those ranked as high performing were actually struggling with adopting basic agile practices! Clearly, something had gone wrong in the original assessment using the Agile Health Radar (AHR) tool. Was the data collected from this tool used perhaps for a different purpose?

Our team spent 8 weeks completing the assessment and documenting the findings and observations. Our approach was thorough and quite different from using a standard assessment tool. Rather than relying solely on the latter, we did many face-to-face interviews, shadowed and attended team and leadership meetings, spent time speaking with key players, and analyzed their reports and team dashboards. By gathering narrative accounts from people and focusing more on relationships and conversations, we were able to paint a much more accurate picture of the current environment. Furthermore, we dissected the governance layers, talked to leaders, and came to understand their leadership as it pertained to supporting, enabling and managing teams, as well as their expectations.

3. Our Team Approach

At Company X, our assessment activities were handled in an agile and iterative manner, using as a frame of reference the DAD (Disciplined Agile Delivery) lifecycle [DAD]. We experimented with iterations in which we scoped our work into chunks of activities and performed a retrospective at the end of each iteration. A work stream of assessment activities as part of our backlog was created during the Inception stage. Our focus was on building a common understanding and developing leadership consensus around a mutually agreed upon improvement items backlog.

The content and structure of any assessment always depend on the scope and diversity of assessment frameworks, tools, technology and practices, as well as identified gaps and opportunities for improvement. I have performed several assessments in the past 9 years, and each context is different. There is no way we can duplicate the effort and proceed in the same way from one organization to another. Assessments also differ from one organizational entity to another: for example, an entire organization, IT, product teams, project teams, specific programs (traditional, bi-modal, or agile), a portfolio, or each of the SAFe competencies. An agile assessment allows evaluating how those entities are doing in their agile journey.

Let us look at the different stages and provide some details on what activities were performed, and the outcome of each stage.

3.1 Assessment Inception: Preparation and Planning of the assessment

To form a clear picture of the expected outcome, initially we worked with the pre-sales and sales teams to understand the context of the ask. When an assessment is considered, we have a few calls with the client to ensure we understand the goals for this assessment and the context. We also had a discovery session with the company leadership. No details can be dismissed at this stage. We used the Disciplined Agile Delivery (DAD) approach to perform the assessment. As per the DAD inception guidelines, our goals were to:

- Form the assessment team (our coaches as well as the core team from the customer who were designated to help us throughout)

- Decide about the process (what approach we were going to use, the policies such as the Definition of Done (DoD) and Ways of Working (assessment team working agreement), the ceremonies and events to be conducted and areas of assessment)

- Understand the tooling, the existing automation, and the reporting and measurement strategy

- Put in place the conditions that were needed to successfully conduct the assessment (supporting documents, questions, working environment, access, etc.)

- Populate the initial backlog by fully understanding the context and the different groups/structures that needed to be assessed (teams, programs, governance, etc.)

- Create a rough plan for conducting the assessment and gain a consensus on what will be the outcome of the assessment.

Along with the prepared list of questions to see how a particular group is performing, we added questions for each of the five focus areas including process/practices, people, technology, reporting/metrics and organization dynamics.

During our planning, it was vital to understand who was taking part in the assessment. The various governance layers, including the agile center of excellence, the project management office (PMO), the portfolios, programs and a number of teams were designated to be part of the endeavor. The organization agreed with us to poll leadership at the beginning of the process to identify relevant structures, people, and teams.

There were things about governance, programs, teams and other organizational level assessments that needed to be fleshed out, since each group required different interview questions, techniques, and expectations from the customer.

At the end of the inception, we performed an “Inspect and Review” both to understand our readiness for the assessment and to gain consensus on the start date of the first iteration. Among the outcomes of that review were:

- A schedule for interview sessions that was solid enough for the first iteration but forecasted for the rest as things may change (such as availability of people, etc.)

- An initial backlog of activities

- A set of initial interview questions based on the “STAR” method. This helped us discuss the specific Situation, Task, Action, and Result of the situation that was being described

- Consensus on the backlog, the process, and final assets to be delivered to Company X executives.

4. Performing the Assessment

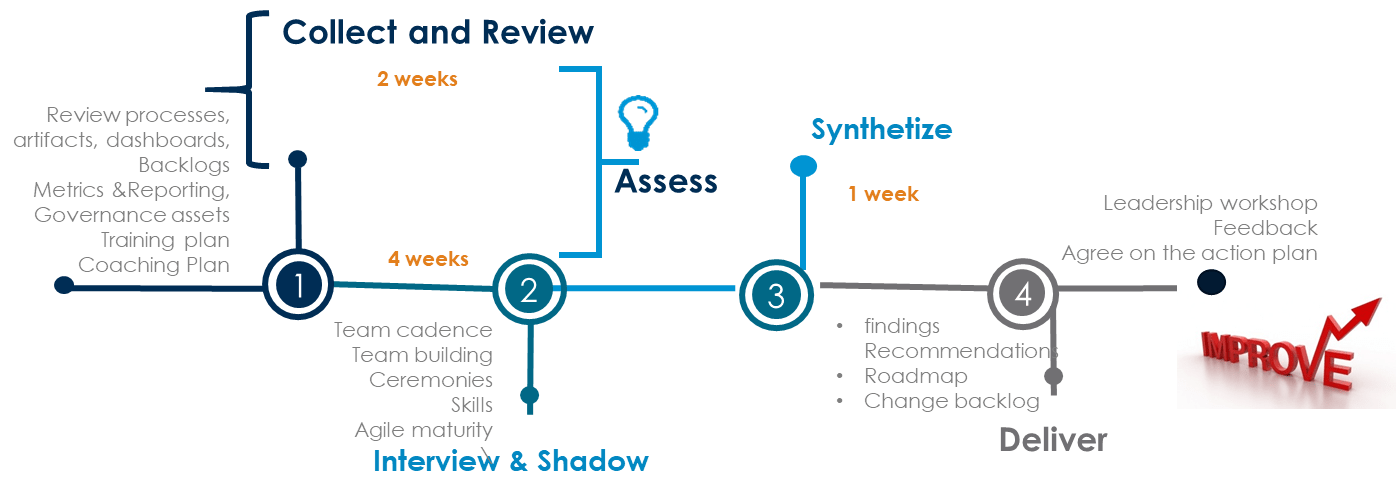

Figure 1. The Lifecycle of an Assessment

4.1 Data Collection, Interview and Shadow

4.1.1 Review Assets (within the PMO, the Agile Center of Excellence)

This review is contextual. It is not intended to provide in-depth information on individual frameworks, tools, and methods, or on assets created as a result of following a prescribed framework, nor to assess their quality (well written vs. serving a specific purpose). Rather, it is intended to give a broad overview of the current state of the company’s adoption, regardless of any approach that has been used. If an assessment of any framework usage has been requested (for example, to review whether SAFe has been properly adopted), the data collection will include whether teams or teams of teams are moving towards the adoption of such a framework or struggling.

4.1.2 Review the Governance Model

It is of utmost importance to understand the existing governance model. The process of decision-making, the process by which decisions are implemented, and the alignment of an initiative (project, program or product development) with organizational goals to create value can make or break your agile implementation. Traditional governance typically focuses on a centralized decision-making, command-and-control, activity- and documentation-based approach. Teams are expected to adopt and then follow corporate standards and guidelines, to produce (reasonably) consistent artifacts, and to have those artifacts reviewed and accepted through a “quality gate” process. On the other hand, agile governance is participatory, consensus oriented, accountable, transparent, responsive, effective and efficient, equitable and inclusive. Agile governance is the application of Lean-Agile values, principles and practices to the task of governance. So what happens when an organization keeps its traditional governance when undergoing an agile transformation? The result is a governance façade that often injects risk, cost, and time into the team efforts, regardless of how agile teams may be: the exact opposite of what good governance should be about.

Artifacts created by teams or managers concerning program and delivery management are also of vital importance as they help to share or “radiate” information. Some planning artifacts, including the product backlog, the iteration backlog, burn down charts, and product increments, were in the scope of the assessment.

4.1.3 Review of Roles and transition to agile roles

We wanted to understand whether the company had prepared the transition and created any personas for the new agile roles. How well was the transition made? Did they have a map for easing the transition from traditional roles to agile roles? Alternatively, did they rely on some job descriptions and fill the servant leadership roles by recruiting contractors? How have traditional managers adapted to be part of the agile organization and how have they handled their everyday management challenges, given the agile disruption?

4.1.4 Interviews and Shadowing

Portfolio and Programs Management (PPPM). It was important for us to know the “modus operandi” of the PMO. Was it supportive of the agile transformation or rather an inhibitor of some of the changes? How were all the underlying processes (funding, planning, compliance, risk management, etc.) working together towards achieving results? What were the standards that were reinforced at the team level? How did the PMO ensure that the scope and benefits had been delivered as agreed? Were backlogs created at all levels? Did the tool provide transparency from the deliverable to epics to user stories?

Teams. Given that the agile leadership roles were filled at the team level, how was each role performing? We needed to figure out the experience, the competencies, and the opportunities for improvement, and to identify any gaps. We observed their ceremonies and their current practices in terms of planning, story writing, refining their backlog items, handling interruptions, and handling changes. We reviewed their story/task dashboards and understood the daily management of those boards. We needed to understand what metrics were being collected and what reporting strategy each team had, for example, whether some reports were shared with management or not. We paid attention to teams perceived as being “high performing” and validated whether or not they indeed were.

4.1.5 Review of the assessment tool results and the conditions in which the tool was used

We wanted to know what was being communicated about the assessment itself. Were teams aware of why they needed to be assessed? Were they ready and prepared? Were they fully engaged to participate in this assessment? Last but not least, did the teams know that they were put into different buckets (high performing vs. average vs. non performing teams)? And what data was used by the PMO to decide on the different classes for team performance?

4.2 Synthesize and consolidate

The cycle described in Figure 1 (Collect, Interview, Shadow and Synthesize) was repeated each iteration. Consolidating our findings each iteration helped us understand our gaps and adjust the next iteration backlog. During the last iteration planning, our goal was to focus solely on the creation of the deliverables: our findings and recommendations. The findings report was one of the outcomes of the assessment; it highlighted what the data revealed or indicated. We summarized the main points into 5 categories: organization and governance, process, people including leadership, tool technology, reporting and metrics. We made a call on implications when appropriate. My intention is not to provide the full report of findings but to show some examples that illustrate the number and types of improvements that were recommended.

4.2.1 Findings for governance, portfolio/programs and leadership support

Prolonged sign-off and approval, and a legacy bureaucracy driven by the desire to manage risk and compliance, characterized the current governance. Compliance was demonstrated through documentation, end-stage tool gates and checklists. Centralized decisions were made mostly at the steering committee level. This may have been the cause of the lack of ownership and accountability.

The PMO was the control mechanism for portfolio, project, and program managers. The goal of the PMO was to ensure that standards for solution delivery were met for quality and compliance. Despite the good intention of mitigating risks, governance and compliance activities were definitely slowing down the effectiveness of agility. Even with the help of Scrum masters, the current governance layers above teams were hindering the efficiency of those teams. Moreover, managers were not empowered with the responsibility and authority to fulfill their mission. Program managers were promoted from their project management duties. They kept their command-and-control hats and lacked the true leadership mindset needed to fully support the agile adoption and their teams. It also did not help that decision-making was centralized at the steering committee level.

4.2.2 Findings for teams

Teams were taking shortcuts. They were not doing reviews. They were skipping retrospectives. Where teams were performing retrospectives, they were never acting on the improvement items as no one took responsibility for owning those items. A few teams struggled to meet their iteration/release goals, which caused friction with the managers. Some teams were context switching, moving from one story to another. We also paid attention to interactions between teams and managers, between team members, and across teams. In particular, we looked into psychological safety, group learning, and peer feedback. Without going into the details of our observations, let’s just say some were very positive too.

Now findings about the company’s usage of the assessment tool: We compared our findings with what managers concluded after getting the results from the assessment tool. Our analysis searched for patterns that emerged from the findings and found that teams classified as high performing were far from being high performing. Our assessment clearly contrasted with their categorization of teams. Why did that happen? Was it a bad interpretation of results by managers? Categorizing teams into different buckets was not at all the outcome that one would expect from the usage of an assessment tool. This finding was one of the motivating factors to submit this report proposal to the Agile Alliance conference.

One of the driving factors behind an assessment should be the goals and the will to improve, not the fear of measuring how badly the team is doing. What happened in this situation? I felt managers did not have the means to measure the performance of their teams, so they preferred to choose the wrong data. Without going far into blaming the management, I will take you through my analysis in Section 5.

4.2.3 Recommendations, Action plan and improvement steps

The data collected (our observations and findings) were sliced and diced to deliver valuable recommendations. We developed the recommendations in another report; all of them were actionable. They followed the same categories as the findings (organization/governance & leadership, process, tools technology, metrics and reporting). We presented recommendations as a ranked list, meaning that we presented the items that we considered most relevant (top and medium priorities) first. Those priorities were based on several factors we discussed with the Core Team, chiefly which items added the most value and which were most urgent to act on.
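The ranking described above can be illustrated with a small sketch. It is not the scoring we used at Company X: the 1-to-5 value and urgency scales, the sum used as a priority score, and the sample items are all hypothetical, chosen only to show how a ranked list can be derived from factors agreed with a core team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    title: str
    category: str
    value: int    # hypothetical scale: 1 (low) to 5 (high)
    urgency: int  # hypothetical scale: 1 (low) to 5 (high)


def rank(recommendations):
    """Order recommendations by value plus urgency, highest first.

    Ties on the combined score are broken in favor of higher value.
    """
    return sorted(
        recommendations,
        key=lambda r: (r.value + r.urgency, r.value),
        reverse=True,
    )


# Illustrative items only; they are not taken from the actual report.
items = [
    Recommendation("Share team metrics with management", "metrics and reporting", 3, 2),
    Recommendation("Act on retrospective improvement items", "process", 4, 5),
    Recommendation("Decentralize steering committee decisions", "organization/governance & leadership", 5, 3),
]

ranked = rank(items)
for position, rec in enumerate(ranked, start=1):
    print(position, rec.category, "-", rec.title)
```

In practice the weighting would come out of the conversation with the core team rather than from a fixed formula; the sketch only makes the ordering explicit and repeatable.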

From these recommendations, we populated a backlog with change and improvement items. As the last step of our engagement we held a Leadership Workshop with the Core Team to review and prioritize the backlog and review the DoD. We scheduled a 4-hour session to gain consensus and engage in creating an action plan for the next stage. The workshop was also an enablement session for leaders on how to support and better serve their teams, and on the expectations arising from this collaborative interpretation of results. We categorized recommendations into strategic and tactical improvement activities, created a visual future-state road map of prioritized items, and reviewed budget impact and other considerations.

Overall the assessed organization was satisfied with the outcomes and felt they had gotten the best “bang for their buck.” This experience generated for me a number of personal insights about assessment activities, in particular what to expect when using external consultants and the potential pitfalls when any assessment tool is used.

5. Assessment Options

There is no shortage of assessment options available. There are tools as well as other methods that you can use. How can you judge the value of any approach to help you evaluate how your teams or entire organization are doing on your agile journey? How can you reasonably choose an appropriate assessment option to use for your organization? I will reflect on some options, the pros and cons of each, and the differences between them.

5.1 Assessment with tools: What to Expect

A search of the Internet yields plenty of agile or lean assessment tools. The first thing to understand is whether a tool is good enough to provide an accurate assessment. While many of these tools are excellent, they aren’t helpful unless you know what you should be assessing. Tools can help overcome geographical boundaries but they still have some gaps. How can any assessment tool vendor take into account all the contexts that we face in different organizations?

More importantly, assessments are mostly about people. Unless we understand the foundation and critical success factors of embracing an agile mindset and living the agile principles in our daily project life, we remain fools. Until then, the saying “a fool with a tool is still a fool” holds true. Back in 2008, when I first used self-check agile assessments, all I had was a sophisticated Excel tool. It fulfilled its purpose at that time as it guided us in understanding whether some teams were adopting some agile practices and how well they were doing. This tool gave me the power to customize it based on the situation I was in.

Today, there is a profusion of tools such as Agendashift, Comparative Agility, Agile Fluency Model, AHR, and more. A more complete study (outside of the scope of this report) would look at a number of possible benefits and pitfalls of each assessment tool. In my story, AHR was in the picture. Let me share my analysis of the benefits, possible drawbacks and misuse of that tool.

Tool conception: In each category, a number of questions were asked. The responses were selected based on a scale of 1 to 10. Already, I see that a measurement system based on the Crawl-Walk-Run-Fly approach may not work in all situations. It can be applicable to a small subset of stable teams, but it is definitely not appropriate for strategic transformation because of the imminent danger of the competition transforming faster than you to better serve (and thus take) customers in the marketplace. In addition, for the teams, two results are captured: one is related to the agility of the team, and the other to their performance. Both agility and performance are measured using the same metric system (Crawl-Walk-Run-Fly). In my opinion, performance measurement does not follow the same pattern as agility.

Tool results interpretation: In essence, agility relates to teams and members having the skill sets and competencies, as well as demonstrating that they are applying those skills. However, if I am crawling instead of running from a performance perspective, it looks bad in the eyes of managers. To me it is meaningless from a performance viewpoint. What is crawling or even running/flying from a performance point of view? I see this as one reason why managers took the liberty of putting people in different performance buckets. As stated by Stacey Barr [Barr][AHR], common usages of performance measures are to judge people’s performance, hold people accountable, report upwards to management or leadership, or to jump through bureaucratic hoops (we just have to have them, no thought about why). I expect this is not something any tool vendor would have envisioned. So my take on this: without a strategy for how to use the tool, you end up using it poorly. As mentioned above, you tend to judge people, and what we found at Company X demonstrated that hypothesis.

Tool results used as a substitute for governance: Good governance defines the performance measures for teams and programs and does not rely on tools to support the performance review process and employee engagement results. If we want to correlate agility with performance, one way to do so is to back our conclusions with some data. In any assessment let’s not dismiss the power of metrics that are collected on a regular basis in real time (for example, number of defects, number of completed stories, etc.).
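One way to back such a conclusion with data is to compute a simple correlation between the tool’s agility scores and a metric collected during delivery. The sketch below is only an illustration: the team scores and defect counts are invented, and the `pearson` helper is a hypothetical name, not part of any assessment tool.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of the same length, at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined when a series is constant")
    return covariance / sqrt(spread_x * spread_y)


# Invented data for five teams: self-assessed agility score (1 to 10)
# and defects found per iteration over the same period.
agility_scores = [8, 7, 9, 4, 5]
defects_per_iteration = [9, 7, 10, 3, 4]

r = pearson(agility_scores, defects_per_iteration)
print(f"correlation between agility score and defects: {r:.2f}")
```

With this made-up data the coefficient is strongly positive, meaning the teams that rate themselves highest also produce the most defects. A result like that would not prove anything by itself, and five data points are far too few to draw conclusions, but it would be a signal to go and talk to the teams before trusting the scores.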

This organization was using the tool for team assessments every three months (when all went well). In my view, long stretches between assessments are deadly because problems in the process may go undetected for too long (yes, three months is too long in the life of an agile team).

Furthermore, when an organization experiments with agility at the team level, if they also have not transformed the roles of managers, this may lead to a situation where managers use assessment data to punish or reward teams. Along with this, if a culture of continuous improvement is not firmly established, you will end up with results opposite from what you hoped for.

Moreover, while tools get all the fanfare, it’s the holistic perspective of an organization’s current state that matters. The teams’ ecosystem matters most. If you need to assess this ecosystem, you may end up using more than one tool in your model. Not only can this be overwhelming, but you also will not have an accurate picture of your overall reality.

Often, tools generate data but the team takes no actions. As Sally Elatta states: “Measurement with no action is worthless data.” [AHR]

You choose one answer over another or answer yes or no to a question. Assessment tools become a substitute for surveys and by their very nature produce answers that lack depth. This may lead to biased responses.

Results are supposed to be anonymous from one team to another. Often, managers do not follow the rules and make them visible, which leads to them comparing one team with another (shame!).

If teams do not have the right enabling conditions, answers can be gamed, depending also on who provides the answers (for example, Scrum master vs. team member). A lack of sense of purpose and compelling direction (why?), the lack of a safety net, and managers who are not ready to hear some of the feedback are examples of conditions that do not promote a supportive context.

5.2 Overcoming tool pitfalls

I have some thoughts on how to overcome potential tool limitations. First, provide awareness on what continuous improvement means at the team level and for the organization as a whole. Agree on norms that discourage destructive behavior and promote a supportive context when using a tool. After all, a tool is only a tool. It is better to focus on using tools to build relationships between people instead of on the tools themselves. Use the tool for the purpose it serves best. Tools provide a forum for the team to take a closer look at themselves along with their stakeholders and prompt improvements and changes. Use the tool only as a reflection tool for the team to reach the next level of agile maturity. This is what matters most. Encourage looking at the correlations between the tool results and the actual data (development metrics, etc.) being collected during delivery. An experienced coach can help with this. And if you do work with a coach, work with one who will suggest several options for changes that will help you meet your organizational goals.
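As a rough illustration of checking tool results against delivery data, the sketch below flags teams that the tool rates highly but that meet few of their iteration goals. Everything in it is an assumption made for the example: the team names, the numbers, the thresholds, and the `flag_mismatches` helper. The intent is to start a conversation with the flagged team, not to rank or judge it.

```python
def flag_mismatches(teams, high_score=7, low_goal_rate=0.5):
    """Return names of teams rated high by the tool but meeting few iteration goals.

    `teams` maps a team name to (tool score on a 1 to 10 scale,
    share of iteration goals met between 0.0 and 1.0).
    Both thresholds are hypothetical and should be agreed with the teams.
    """
    return [
        name
        for name, (tool_score, goal_rate) in teams.items()
        if tool_score >= high_score and goal_rate < low_goal_rate
    ]


# Invented sample data, not taken from the Company X assessment.
teams = {
    "Team A": (9, 0.4),  # rated high, yet misses most iteration goals
    "Team B": (8, 0.9),  # rated high and delivering
    "Team C": (4, 0.3),  # rated low and struggling, so no mismatch
}

mismatches = flag_mismatches(teams)
print(mismatches)
```

A flagged team is a prompt for observation and interviews, which is where a coach can help interpret what the numbers cannot show.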

Finally, recognize there are some things you cannot directly measure with a tool: Team cohesion, bonding and teambuilding, successful teamwork, open communication, true people accountability and ownership, and alignment of team goals with overall business strategies.

5.3 Hiring External Consultants for the Assessment

There are benefits to using consultants to conduct assessments instead of only using a tool. Consultants are not limited in the number of techniques they can use. They can choose accordingly. They can give a clear picture of the current situation by combining assessment techniques such as observing/shadowing, reviewing assets, and conducting group and individual interviews. With consultants, not only do you get more complete data, shining light on both challenges and blind spots, but they can also reveal where there is successful teamwork, open communication, true people accountability and ownership, and bonding between people. With consultants, the desired future state can be expressed with clear recommendations given the known constraints of the organization and the teams.

An assessment is most useful when it is a “joint discovery” having the leadership team involved with consultants and guiding them throughout. Instead of consultants calling “their baby ugly,” together with leadership you can jointly come to a consensus on what challenges teams have and how to overcome those challenges.

A consultant takes a holistic approach. Learning about the current situation emerges through systems of relationship with others. Understanding takes place and is facilitated by the process of peeling additional layers. When as a consultant I assess a team or number of teams, I am not limited in the amount of data I can collect. Moreover, systems thinking is definitely used by experienced consultants. This is key to move through and understand the layers of the organizational system as you learn more about the current situation and see what aspects of the organization influence the current operating model of the teams. Sometimes the same factors can be barriers to the success of the teams, at other times, they are enablers and positively guide/support them.

There can also be drawbacks to using consultants. Some organizations want to be told what to do instead of collaborating with the consultant to understand the situation and reach joint conclusions. If that is the case, they may not be ready for the assessment. If the assessment goes beyond 6 weeks, it can be expensive. There is also little value in paying for a separate assessment engagement and then requesting coaching long after the fact. Combining an assessment with some coaching is a better investment, because you can coach and help teams improve while the assessment happens.

When push comes to shove, and after all is considered, no matter which option you choose for the assessment, the most important factors for success are:

- A readiness for continuous improvement. Managers and teams cannot be inspired if they don’t know what they’re working toward and don’t have explicit goals.

- Engaging people through continuous improvement. Collect the metrics that matter most, and do not skip your inspect and adapt sessions; use reflection tools and act upon the results.

- A continuous, feedback-driven, supportive context. This is particularly critical to team success and can be enabled through continuous improvement practices such as retrospectives at all levels and peer reviews.

Often a combination of options (a consultant-based assessment and the use of a tool) is recommended. Regardless, it is imperative to have leadership support so that leaders can communicate expectations, provide clear direction, orient teams, and align them with the purpose. Finally, to promote a creative work environment, celebrate people and their work, promote learning, and reward improvements.

6. CONCLUSIONS AND LESSONS LEARNED

This experience allowed me to discover the prerequisites for an effective assessment, as well as tips on how to optimize the use of a given assessment tool. In addition, here are some strong beliefs I hold. DO NOT use an assessment tool as a substitute for governance. What good is a quarterly assessment using a tool if there is no established sense of purpose for agile adoption and you do not have a continuous approach that constantly looks for improvements? What good is an assessment if you left your adoption to luck without continual leadership support? To me, setting expectations for teams and keeping leaders responsible and involved is central. What good is a point-in-time assessment if you already take shortcuts with your retrospective and review sessions, or do not invest time in acting on your improvement items and reviewing feedback? Do not use the agile assessment tool to EVALUATE and SUPPORT the performance review process and employee engagement results. By doing so, you have already made room for gaming the results.

Tools are context free. Mostly they know what it is to do “agile,” but not so much what it is to be “agile.” The latter is context sensitive. I also learned that we should not rely solely on tools, because there is a “human” aspect that greatly affects the results. Among other things, we discovered that if you are really doing an assessment, you want to take your agile skills to the next level of knowledge and understanding. However, in my view, tools focus mostly on knowledge and less on understanding, assuming that knowledge is a matter of fast retrieval of facts. Understanding is simply ‘standing under’: experiencing knowledge in an intimate way. This can only be achieved through human intervention.

7. ACKNOWLEDGEMENTS

I am so grateful to my shepherd Rebecca Wirfs-Brock for helping me bring this report to life. Thanks ever so much for your relentless advice and guidance.

REFERENCES

[AHR] Sally Elatta. AgilityHealth Radar. Website: https://agilityhealthradar.com/Agility

[Barr] Stacey Barr. Practical Performance Measurement. The PuMP Press, 2014.

[DAD] Disciplined Agile Delivery. Website: https://www.pmi.org/disciplined-agile/process/introduction-to-dad